You can understand Section 230 in one sentence: it often means a website is not legally liable for what its users say, and it can still moderate without automatically becoming responsible for everything it did not remove, subject to important exceptions.

That can sound like a special deal for Big Tech. It is more basic than that. It is the legal plumbing that lets comment sections exist, lets review sites host harsh reviews, and lets platforms remove spam, threats, and pornography without taking on the legal role of a newspaper editor for every post that slips through. Outcomes can still depend on the claim, the facts, and the jurisdiction.

The plain-English meaning of Section 230(c)(1) and (c)(2)

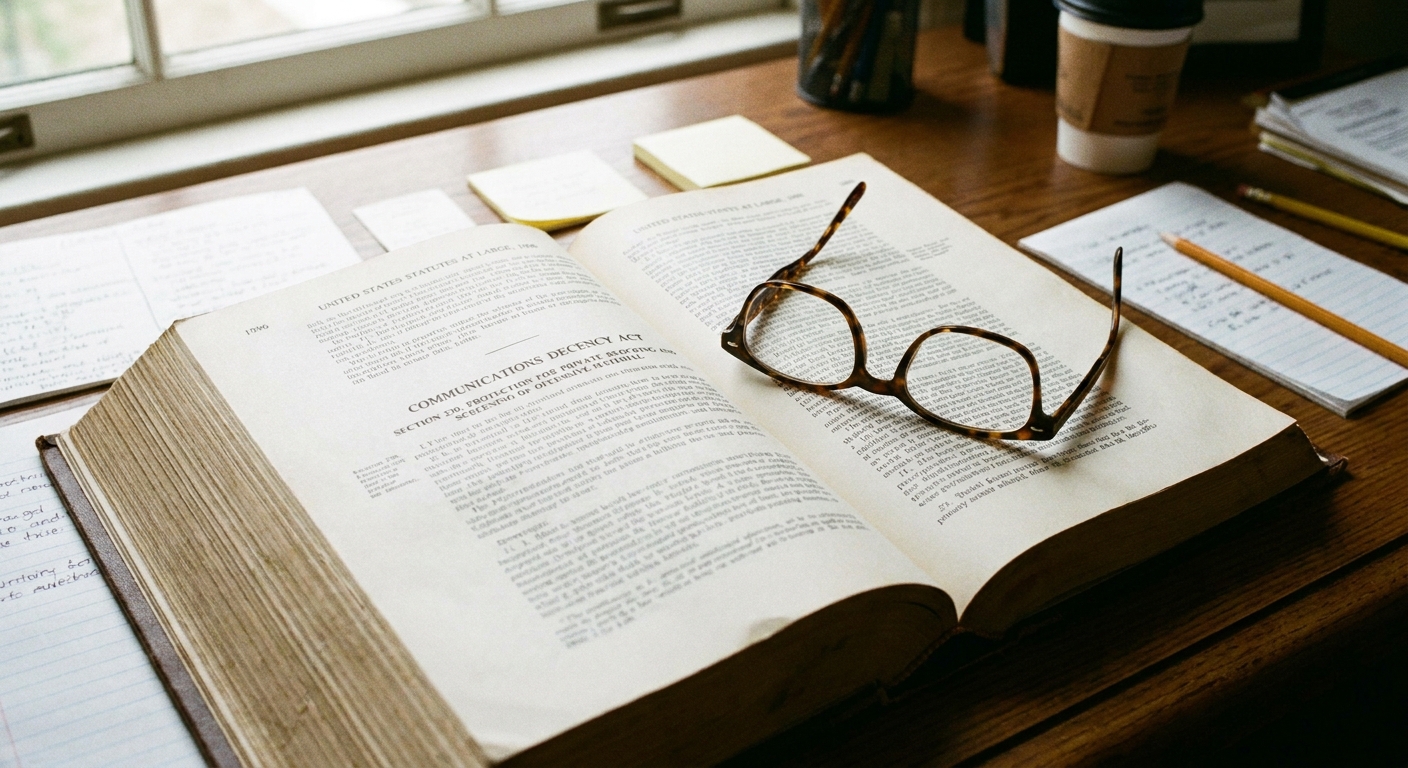

Section 230 is part of the Communications Decency Act of 1996. The two key provisions most people argue about are in 47 U.S.C. § 230(c).

230(c)(1): the “not the speaker” rule

Section 230(c)(1) says that a provider or user of an interactive computer service cannot be treated as the publisher or speaker of information provided by another information content provider.

In plain English: if a third party wrote it, you generally do not become legally responsible for it just because you hosted it.

230(c)(2): the “good-faith moderation” rule

Section 230(c)(2) adds protection for voluntary, good-faith moderation. It covers actions taken to restrict access to material the provider or user considers obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable, whether or not the material is constitutionally protected.

In plain English: you can remove or restrict posts you think are low-quality, dangerous, or simply not what you want on your service, and Section 230 can help shield you from lawsuits claiming you should not have taken them down. This part of the law is typically about liability for removing or limiting content, not a general rule about liability for leaving content up.

These two provisions work together. One protects hosting. The other protects moderating.

What Section 230 does not cover

Section 230 is broad, but it is not universal. A few limits show up constantly in real cases:

- Your own speech. You are always responsible for what you write yourself. If you create or materially help develop illegal content, Section 230 may not apply to that specific content or claim.

- Federal criminal law. Section 230 does not bar federal criminal enforcement.

- Intellectual property. Section 230 does not block many IP claims (like copyright and trademark) brought under relevant law.

- Sex trafficking claims (FOSTA-SESTA). In 2018, Congress added a targeted carve-out for certain sex-trafficking claims.

There are also edge questions that vary by legal theory and jurisdiction, especially when plaintiffs try to repackage a publishing complaint as “product design,” “failure to warn,” or other non-defamation labels.

Who counts as a “provider” or “user”?

Section 230 uses a term that sounds technical but is intentionally broad: interactive computer service. Courts have treated this as covering many internet intermediaries, including:

- social media platforms

- forums and message boards

- comment sections on news sites

- review sites and marketplaces that host user listings

- many email services and hosting services, depending on the claim

Two words matter here:

- Provider: the service running the site or platform.

- User: often includes individual account holders who republish someone else’s content, depending on the situation.

Section 230 is not only for household-name platforms. It is also for the small town newspaper website that lets readers argue under an article, and for the niche hobby forum run by volunteers.

Hosting vs. your own content

Section 230 is often described as “immunity,” but it is more accurate to say it blocks a category of claims that try to treat a host as the publisher of someone else’s words.

Here is the key distinction:

- Hosting user content: If a user posts a defamatory review, Section 230 often prevents the target from suing the website for defamation based on that user’s words.

- Creating or developing content: If the website itself writes the defamatory statement, Section 230 does not help. You are always responsible for your own speech.

Courts draw this line using the statute’s companion definition: an information content provider is someone “responsible, in whole or in part, for the creation or development” of the content.

That “in part” is where many modern cases live. If a platform materially contributes to what makes the content unlawful, a court may treat it as partly responsible and deny Section 230 protection for that specific claim.

Quick examples

Concrete scenarios help show what Section 230 is doing.

- Defamatory review: A user posts “This dentist commits insurance fraud” on a review site. The dentist can usually sue the user. Section 230 often blocks suing the site for defamation based on the user’s allegation.

- Marketplace listing: A seller posts a listing for a prohibited item. If the claim against the marketplace is basically “you should not have hosted that listing,” Section 230 is often central. If the marketplace helped create the illegal listing content or required unlawful details, the analysis can change.

- Forum repost: A user copies a rumor from elsewhere and reposts it in a forum thread. Section 230 can protect the forum for hosting it. The reposting user may also be able to invoke the statute's protection for a “user,” depending on the claim and on whether they added anything of their own to the rumor.

- Edge case: A platform prompts users with a template that pushes them to add unlawful content, or it materially edits posts to add the unlawful part. That can look less like hosting and more like developing the illegality, which can weaken or eliminate Section 230 protection for that claim.

Common myths

Myth 1: “Section 230 lets platforms censor people, so it violates the First Amendment.”

The First Amendment limits government action, not private editorial decisions. A private platform deciding what it will host is generally exercising its own speech and association rights, not violating yours.

Section 230 does not give the government a censorship button. It limits private civil liability in specific situations. Even without Section 230, the First Amendment would still not require a private website to carry your post.

Myth 2: “Platforms get 230 only if they are ‘neutral.’”

Nothing in Section 230 requires political neutrality. The statute was written to encourage moderation, not to punish it. Courts have repeatedly rejected the idea that a service loses Section 230 protection just because it takes sides, sets rules, or curates.

That said, some disputes turn on a different question: whether the platform’s own conduct or content crosses into “development” of what is illegal, for example through prompts, templates, rankings tied to unlawful criteria, or paid placement that materially contributes to the alleged wrongdoing.

Myth 3: “Publisher vs. platform is a legal switch.”

You will hear people argue that once a site moderates, it becomes a “publisher,” and therefore should be liable for everything. That sounds intuitive, but it misunderstands what Section 230 changed.

Defamation law traditionally treats decisions like whether to remove content as “publisher” functions. Section 230(c)(1) says that even if those functions look like publishing, the host still is not treated as the publisher or speaker of third-party information for purposes of many claims.

Myth 4: “Section 230 protects criminal conduct.”

Section 230 has explicit limits. It does not bar federal criminal prosecution. It also does not prevent enforcement of intellectual property law, and Congress has added targeted carve-outs over time, including for certain sex-trafficking-related claims. Section 230 is broad, but it is not a general permission slip for crime.

How courts apply Section 230

Many Section 230 disputes are decided early, because defendants argue the case should be dismissed before expensive discovery. Courts often evaluate the issue on a motion to dismiss, though that depends on the claim and what facts are disputed.

Courts tend to ask three practical questions:

- Is the defendant a provider or user of an interactive computer service?

- Is the plaintiff trying to treat the defendant as the publisher or speaker? Many claims are styled as negligence, product liability, or failure to warn, but still rest on “you should not have hosted that post” or “you should have taken it down.”

- Was the information provided by another information content provider? If the harmful content is truly third-party content, Section 230 often applies. If the defendant helped create or develop the illegality, it may not.

This framework is why Section 230 is so powerful in practice. A lawsuit that depends on “they hosted user speech” often ends early, because the statute can stop the claim at the doorstep.

That does not mean plaintiffs never win. Cases can turn on whether the platform’s own conduct is the source of liability, whether a statutory exception applies, or whether the claim is genuinely about something other than publishing third-party content.
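The three questions above, together with the exceptions listed earlier, can be sketched as a rough screening procedure. This is a deliberately simplified illustration, not how any court actually decides a case: the `Claim` fields, the `section_230_screen` function, and the exception labels are all hypothetical names invented for this sketch, and real outcomes still depend on the claim, the facts, and the jurisdiction.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical labels for the exceptions this article lists; not statutory text.
EXCEPTED_CLAIM_TYPES = {"federal_criminal", "intellectual_property", "sex_trafficking"}


@dataclass
class Claim:
    """Simplified facts about a lawsuit. Every field name is illustrative."""
    claim_type: str
    defendant_is_provider_or_user: bool    # question 1
    treats_defendant_as_publisher: bool    # question 2
    content_from_third_party: bool         # question 3
    defendant_materially_contributed: bool = False


def section_230_screen(claim: Claim) -> Tuple[bool, str]:
    """Return (likely_barred, reason) for a claim under this toy model."""
    if claim.claim_type in EXCEPTED_CLAIM_TYPES:
        return False, "a statutory exception may apply"
    if not claim.defendant_is_provider_or_user:
        return False, "defendant is not a provider or user of an interactive computer service"
    if not claim.treats_defendant_as_publisher:
        return False, "claim does not treat the defendant as publisher or speaker"
    if not claim.content_from_third_party:
        return False, "the content is the defendant's own"
    if claim.defendant_materially_contributed:
        return False, "defendant may have helped develop what makes the content unlawful"
    return True, "all three questions point toward Section 230 protection"


# The review-site and template scenarios from the examples earlier in the article.
review_site = Claim("defamation", True, True, True)
template_site = Claim("defamation", True, True, True,
                      defendant_materially_contributed=True)

print(section_230_screen(review_site))
print(section_230_screen(template_site))
```

The checks run in order and stop at the first one that takes the claim outside Section 230, which mirrors why so many of these disputes can be resolved early: a single "no" is enough to end the analysis.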

Section 230 and the First Amendment

Section 230 is not the First Amendment. It is a statute, passed by Congress, that can be revised by Congress. The First Amendment is constitutional law that binds the government.

But they interact.

- Section 230 reduces liability pressure that might otherwise cause platforms to over-remove speech or shut down interactive features entirely. Congress said, in effect, that online hosts should not be held to the same defamation rules as a print publisher.

- The First Amendment still matters because platforms and publishers have their own rights to decide what they will publish. Even if Section 230 were narrowed, many moderation choices would still be protected from government interference.

Think of Section 230 as a policy choice about how much private civil liability we want to attach to hosting speech. Think of the First Amendment as a limit on what government can compel or punish in the realm of speech.

Why Section 230 is a political lightning rod

The statute was written in 1996, when “interactive computer service” mostly meant early web forums and dial-up services. Today it governs the rules of engagement for platforms that can shape elections, culture, and commerce.

That mismatch fuels the debate. Critics argue Section 230 overprotects platforms that profit from engagement, including harmful engagement. Defenders argue that without Section 230, the legal risk of hosting user content would push sites to do one of two things:

- remove far more speech, including lawful speech, to reduce risk

- stop hosting user content altogether, especially for smaller sites that cannot afford constant legal review

Both sides are reacting to the same reality: modern platforms are not passive bulletin boards, but they also are not traditional publishers.

FAQ

Does Section 230 force a platform to host my post?

No. Section 230 is not a must-carry rule. It is a liability shield. A private platform generally can set rules and remove content, subject to contract terms and certain specific laws.

Can a website moderate without losing Section 230 protection?

Often, yes. Section 230 was designed to encourage moderation. Under 230(c)(2), good-faith efforts to restrict content a service finds objectionable can be protected. Under 230(c)(1), a platform can still avoid being treated as the publisher of third-party content even if it engages in moderation and curation. Outcomes can depend on the claim and the facts.

If someone defames me online, who can I sue?

You can generally sue the speaker, meaning the person who created the defamatory statement. Section 230 often blocks suing the site that merely hosted that third-party statement. Whether the host can be sued depends on whether the claim tries to treat it as the publisher or speaker, whether the host helped create or develop the unlawful content, and whether an exception applies.

Does Section 230 apply to federal crimes?

No. Section 230 does not bar federal criminal enforcement. It is primarily a shield against many civil claims and certain state-law theories that treat hosts as publishers of third-party content.

What are the main ideas in current federal reform debates?

Reform proposals vary, but common themes include narrowing protection for certain categories of harm, conditioning protections on particular moderation practices, or creating clearer rules for when platform recommendations and algorithms count as the platform’s own conduct. Courts have often treated ranking and recommendations as protected when they are functions applied to third-party content, but plaintiffs continue to test theories that certain designs or recommendation systems materially contribute to illegality. This area is still developing. Any change to the statute would be legislative.