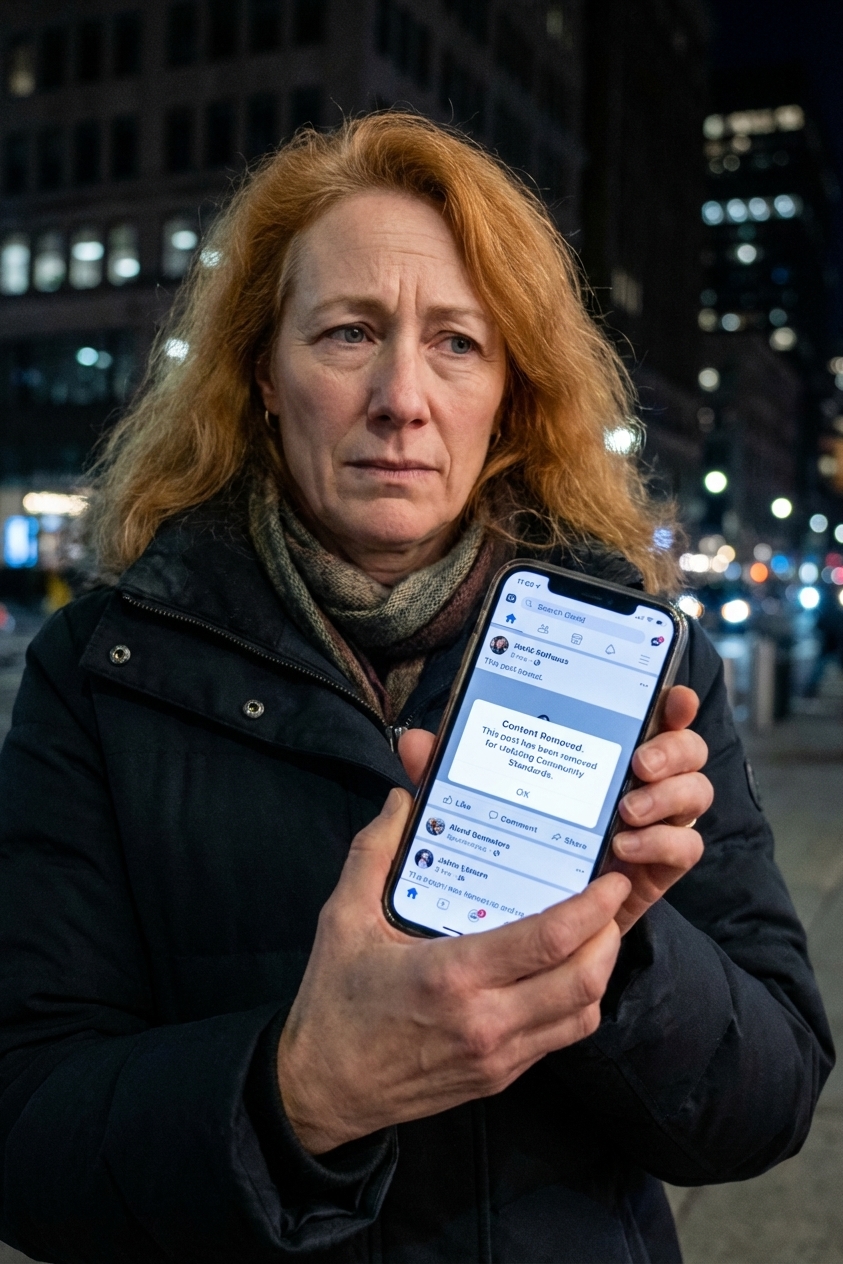

You posted a political take. It got removed. Your account got flagged, throttled (downranked or given less reach), or suspended.

Then comes the sentence everyone reaches for like a constitutional shield: “That’s a First Amendment violation.”

Sometimes it is.

Most of the time, it is not. But there are recurring edge cases, especially when government officials pressure platforms or coordinate removals, or when an official uses a social media page as part of the job.

The reason comes down to one of the most important, least understood rules in constitutional law: the First Amendment restrains the government, not private platforms.

The First Amendment in one sentence

The First Amendment says, in part, that Congress shall make no law abridging the freedom of speech or of the press.

That sounds broad. In practice, it is targeted. The Amendment is a limit on government power. Through the Fourteenth Amendment, it applies to state and local governments too. But the basic structure remains the same: the First Amendment is about what government officials can do to your speech.

That is why you can criticize the President, mock the police, protest at city hall, and publish an op-ed attacking your governor. The government cannot punish you for protected speech simply because it dislikes what you said.

But a private company running a private platform is not automatically bound by that rule.

State action: the doctrine that decides almost everything

If you want the First Amendment to apply, you need a threshold condition: state action.

In plain terms, a constitutional free speech claim requires that the censorship be done by the government, or by someone acting as the government.

What counts as state action?

Courts use multiple tests, but they orbit the same idea: is the challenged decision fairly attributable to the state?

- Direct government action: A law banning certain posts, a police order to remove content, a government agency pressuring a platform with threats.

- Government official acting in an official capacity: An agency runs an official page and blocks users from commenting because they criticize it.

- Private actor doing the government’s job: Rare, but possible when a private party is effectively performing a traditional, exclusive government function.

- Coercion or significant encouragement: For example, an agency threatens enforcement, investigations, permits, or funding unless a platform removes content. Or a formalized government takedown portal is paired with implied penalties for noncompliance.

- Joint participation: For example, officials and a platform coordinate removals in a way that looks less like “tips” and more like shared decision-making.

What usually does not count?

- Private moderation decisions: A platform removes your post for misinformation, harassment, nudity, or hate speech, even if it feels political.

- Terms of service enforcement: Even inconsistent or unfair enforcement is typically not state action.

- Platforms responding to public criticism: A senator complains on TV and the platform changes policy. That is politics, not necessarily coercion.

This doctrine explains the most common modern First Amendment misunderstanding. People feel censored, and their speech really is being restricted. But being restricted by a private gatekeeper is not the same thing as being censored by the state.

Moderation vs. censorship

To users, the experience is identical: your speech disappears.

Legally, it matters who made it disappear.

Government censorship (First Amendment territory)

- A city threatens to revoke a business license unless a website removes criticism of the mayor.

- A state agency orders platforms to take down posts criticizing an election process.

- A public university disciplines a student for off-campus political speech. The First Amendment often applies here, though the outcome can depend on the context and the school’s role.

Private moderation (usually not First Amendment territory)

- Facebook removes a post for violating community standards.

- YouTube demonetizes a channel.

- X suspends an account for harassment or platform manipulation.

- TikTok limits distribution of content it flags as sensitive.

None of those actions are automatically admirable. But they are usually not unconstitutional either, because the Constitution is not a general “fairness code” for private companies.

Can platforms be state actors?

Sometimes users argue that social media is like a public square, so it should be required to host everyone’s speech.

It is a powerful metaphor. It is also usually a losing legal theory.

Courts have been cautious about labeling private platforms as state actors simply because they are big, influential, or widely used. Being essential to public conversation is not the same thing as being the government.

There are narrow situations where government involvement could transform a moderation decision into state action, but they typically require evidence of coercion, significant encouragement, or joint participation by government officials.

That fact pattern does arise. It is just not the default case when your post gets removed.

The platform has speech rights too

There is a second misconception hiding underneath the first: that the First Amendment gives you a right to use someone else’s platform to reach an audience.

But the First Amendment also protects the rights of speakers and publishers to decide what to carry. In the traditional media world, that is obvious. A newspaper can refuse to run your letter to the editor. A TV station can decline to air your ad.

Platforms argue that their moderation choices are a form of editorial judgment, and that forcing them to host speech they do not want is itself a First Amendment problem.

That idea is at the center of the current wave of state social media laws.

State laws targeting moderation

In the last few years, several states have attempted to limit how platforms moderate content, especially political speech.

The basic pitch is simple: if platforms are today’s public square, they should not be allowed to “discriminate” against viewpoints by banning or demoting content.

The constitutional counterargument is also simple: platforms are private entities, and forcing them to carry speech is compelled speech.

That clash has produced some of the most closely watched First Amendment litigation in the country.

NetChoice and what the Court signaled

Texas (and separately Florida) passed laws aimed at limiting how large platforms can moderate or remove content. Trade groups, including NetChoice, challenged those laws.

These cases reached the Supreme Court amid a basic disagreement: are the laws regulating platforms like common carriers, or are they interfering with editorial discretion?

NetChoice v. Paxton (the Texas case) became shorthand for the broader question: can a state force a social media platform to host speech it wants to remove? The Supreme Court decided it together with the Florida case, Moody v. NetChoice.

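In 2024, the Supreme Court did not issue a clean, final merits rule that “platforms win” or “states win” across the board. Instead, it vacated both appeals court decisions and sent the cases back, concluding that the lower courts had not properly analyzed the facial challenges, which require weighing the full range of each law’s applications. Along the way, the majority signaled that curating feeds such as Facebook’s News Feed or YouTube’s homepage is expressive activity the First Amendment protects.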

The takeaway for ordinary users is not that you suddenly gained a First Amendment right to be hosted. It is that the Court is treating platform moderation as something that can implicate the platform’s First Amendment rights.

That makes state attempts to ban moderation more constitutionally complicated than many headlines suggest.

And it reinforces the basic point: your dispute is often not “you versus the Constitution.” It is “you versus a private publisher with its own constitutional protections.”

So what rights do you have?

You have real rights online. They are just not always the ones people assume.

What you generally do have

- First Amendment protection from government punishment: The government generally cannot prosecute you for protected speech posted online, the same as offline.

- Protection against government retaliation: A public employer, school, or agency can run into First Amendment limits if it penalizes protected speech, depending on context.

- First Amendment public-forum limits on official government pages: If a government official uses a social media page as an official tool, blocking critics can become a constitutional issue under the “public forum” line of cases.

- Contract-based expectations: You can sometimes argue a platform violated its own terms or promises, although terms usually give platforms broad discretion.

What you generally do not have

- A constitutional right to a platform account: Being banned from a private platform is usually not a First Amendment violation.

- A constitutional right to reach an audience: The First Amendment restricts government interference, not private distribution choices.

- A right to equal treatment by a platform: Platforms can be inconsistent, biased, or arbitrary without becoming state actors.

When a First Amendment claim is real

There are recurring scenarios where “I was censored online” can move from frustrating to constitutionally significant.

1) An official page blocks you

If a public official uses a page to announce policy, take questions, and conduct official business, blocking critics can trigger First Amendment scrutiny.

The Supreme Court addressed a version of this issue in Lindke v. Freed (2024). It held that an official’s social media activity counts as state action only if the official (1) had actual authority to speak on the government’s behalf on the matter and (2) purported to exercise that authority in the relevant posts. Not every account run by an officeholder is “state action,” but some are.

2) Government pressure on a platform

If officials threaten consequences, coordinate takedowns, or use regulatory power to strong-arm moderation, the state action problem looks different.

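The Supreme Court’s decision in Murthy v. Missouri (2024) turned on standing. The Court held that the plaintiffs had not shown standing to seek an injunction against the federal officials, and it did not decide whether those officials’ communications with platforms crossed the line into coercion. The underlying principle remains: government coercion disguised as “requests” can raise First Amendment issues in the right factual setting. The same term, in NRA v. Vullo (2024), the Court unanimously reaffirmed that officials cannot coerce private parties into punishing or suppressing disfavored speech.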

3) Joint action with government

This is harder to prove. But if the government is effectively directing decisions, supplying lists in a way that amounts to control, or making removal a condition of avoiding legal trouble, plaintiffs may argue joint action.

Practical steps

Legal recourse depends on who did what. Here is the most useful way to triage the situation.

Step 1: Identify the actor

- Private platform decision? Your options are mostly internal appeals, public pressure, and contract or consumer-law theories.

- Government official or agency? You may have a First Amendment claim, especially if you were blocked, threatened, or punished for speech.

Step 2: Preserve evidence

- Screenshot the removal notice, the post, timestamps, and any policy citations.

- Save URLs, account IDs, and any emails.

- If government involvement is suspected, document statements by officials that mention takedowns or “requests.”

Step 3: Use platform processes first

- Appeal the moderation decision through the platform’s tools.

- Request a specific policy explanation.

- If the platform has an oversight mechanism, use it.

Step 4: Consider non-constitutional claims

Depending on your state and facts, possible legal angles can include:

- Contract claims: Did the platform violate its own terms or a specific promise?

- Consumer protection claims: Did the platform misrepresent how moderation works?

- Employment or school claims: If speech consequences came from an employer or school, different legal rules apply.

- Defamation or harassment: If your speech was removed because of false reports or coordinated harassment, the remedy may be against the harassers, not the platform.

Step 5: If government action is involved, talk to a lawyer early

First Amendment claims often hinge on precise facts: whether a page is “official,” whether a request was coercive, whether you have standing, and whether qualified immunity applies to officials. If a government entity or official played a role, consult a civil rights attorney. Many First Amendment suits are brought under 42 U.S.C. § 1983, the federal statute that allows people to sue state and local officials, and local governments, for constitutional violations. Claims against federal officials follow different rules.

A quick Section 230 note

No modern moderation explainer is complete without this: Section 230 is the federal law that generally shields platforms from liability for user posts and also protects platforms for good-faith decisions to remove or restrict content. It is not a First Amendment rule, and it does not give you a right to be hosted. It is more like the legal background that makes large-scale moderation possible.

A reality check that still matters

If you were banned by a private platform, you may have been treated unfairly. But unfair is not the same thing as unconstitutional.

The First Amendment is a limit on government power. Your most important online rights often turn on a less dramatic question than “free speech”: who, exactly, made the decision?

Note: This is general information, not legal advice.